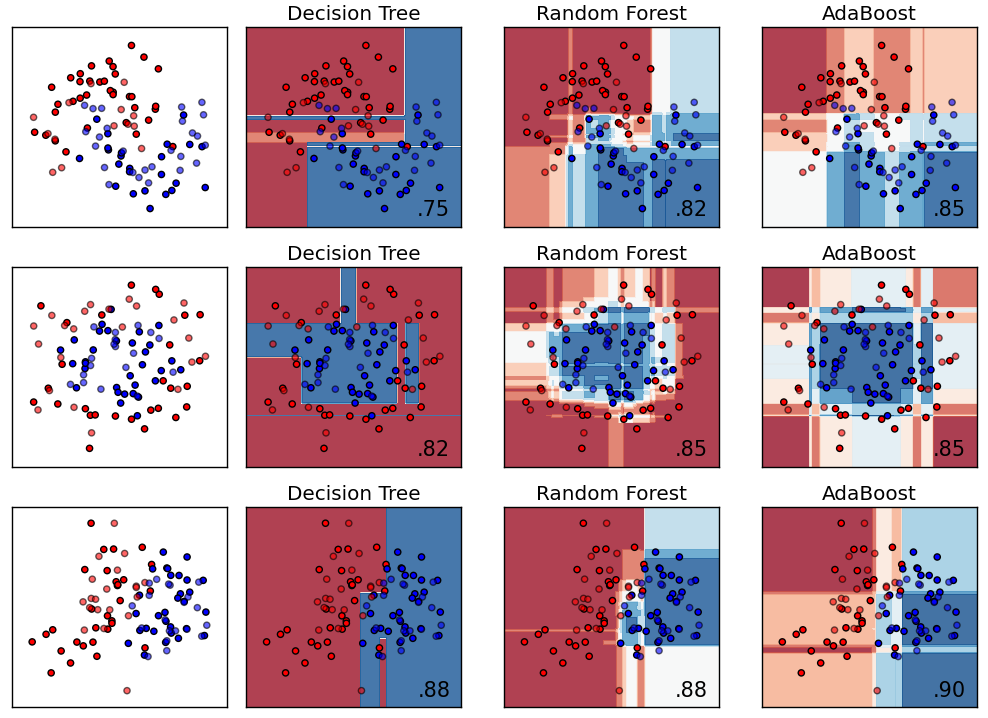

Students are customers of a higher education institution. Universities provide various services and facilities for students, such as academic services, student services, and infrastructure, including information technology services. Student satisfaction with the quality of university services is one of the factors that affect the academic performance of the university. This study aims to compare the accuracy of the Random Forest, Support Vector Machine, and Neural Network methods for classifying student satisfaction with the services provided by higher education institutions. The dataset consisted of 430 respondents from private universities in Vietnam, taken and adapted from previous research. The data were split 80:20 into training and testing sets. Model performance was measured from the confusion matrix using accuracy, sensitivity (recall), and precision. The results showed that Random Forest produced the best accuracy, 76.47%, followed by the Neural Network and Support Vector Machine algorithms with an accuracy of 74.12% each.

Traditional decision trees diverge into a bagging version (i.e., random forest) and a boosting version (i.e., gradient boosted decision trees). Machine learning algorithms such as support vector machine (SVM) and random forest (RF) have also been in the spotlight in work on tree species. Adding a differentiable neural decision forest to a neural network can generally help exploit the benefits of both models. This suggests that further gains in both scenarios may be realized via further combining aspects of forests and networks. This technical report will be revised in the coming months with updated results.

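
The evaluation setup described above (an 80:20 split, then accuracy, recall, and precision read off the confusion matrix) can be sketched in plain Python. This is only an illustration under stated assumptions: the helper names `train_test_split` and `binary_metrics`, the fixed seed, and the toy labels are hypothetical and are not taken from the study.

```python
import random


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of `rows` and return (train, test).

    test_fraction=0.2 gives the 80:20 proportion; the seed is an
    arbitrary choice so that the split is reproducible.
    """
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def binary_metrics(y_true, y_pred, positive=1):
    """Compute accuracy, recall, and precision from confusion-matrix counts."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Recall (sensitivity): share of actual positives that were found.
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        # Precision: share of predicted positives that were correct.
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
    }


# Hypothetical usage: 430 placeholder rows, then toy labels for the metrics.
train, test = train_test_split(range(430))
metrics = binary_metrics([1, 1, 1, 0, 0, 0, 1, 0], [1, 1, 0, 0, 0, 1, 1, 0])
```

With 430 rows this split puts 344 rows in the training set and 86 in the test set. The toy labels are made up purely to exercise the function.
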
Specifically, the representation space that they both learn is a partitioning of feature space into a union of convex polytopes. For inference, each decides on the basis of votes from the activated nodes. This formulation allows for a unified basic understanding of the relationship between these methods. Empirically, we compare these two strategies on hundreds of tabular data settings, as well as several vision and auditory settings. Our focus is on datasets with at most 10,000 samples, which represent a large fraction of scientific and biomedical datasets. In general, we found forests to excel at tabular and structured data (vision and audition) with small sample sizes, whereas deep nets performed better on structured data with larger sample sizes.

Traffic prediction is a spatiotemporal predictive task that plays an essential role in intelligent transportation systems. Today, graph convolutional neural networks (GCNNs) have become the prevailing models in the traffic prediction literature since they excel at extracting spatial correlations. In this work, we classify the components of successful GCNN prediction models.

For classification tasks, the output of the random forest is the class selected by most trees.
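
The majority-vote rule for classification can be illustrated with a minimal sketch. Everything here is hypothetical: the "trees" are hand-written threshold rules rather than trained models, and the names `make_stump`, `forest_predict`, and the class labels are invented for the example.

```python
from collections import Counter


def make_stump(feature, threshold):
    """Return a toy one-split 'tree' that thresholds a single feature."""
    def predict(x):
        return "satisfied" if x[feature] > threshold else "unsatisfied"
    return predict


def majority_vote(predictions):
    """Return the most frequent label.

    On a tie, Counter.most_common keeps the label that appeared first.
    """
    return Counter(predictions).most_common(1)[0][0]


def forest_predict(trees, x):
    """Ask every tree for a label, then take the majority."""
    return majority_vote([tree(x) for tree in trees])


# Three hand-built stumps standing in for trained trees.
trees = [make_stump(0, 3.0), make_stump(1, 2.5), make_stump(0, 4.0)]
label = forest_predict(trees, (3.5, 3.0))
```

For the input `(3.5, 3.0)`, two of the three stumps vote "satisfied" and one votes "unsatisfied", so the forest returns "satisfied".
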

Conceptually, we illustrate that deep networks and decision forests can both be profitably viewed as "partition and vote" schemes.

The fundamental difference between a random forest and a decision tree is that a decision tree is a single graph, whereas a random forest (or random decision forest) is an ensemble learning method for classification, regression, and other tasks that operates by constructing a multitude of decision trees at training time.
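
How a multitude of trees is built at training time can be sketched with bootstrap aggregation (bagging). This is a simplified illustration, not a full random forest: each "tree" is a single-threshold stump on one feature, there is no random feature subsampling, and the function names and toy data are assumptions made for the example.

```python
import random


def fit_stump(samples):
    """Pick the threshold whose rule 'predict 1 when x > threshold'
    classifies the most samples correctly."""
    best_threshold, best_correct = None, -1
    for threshold, _ in samples:
        correct = sum(1 for x, y in samples if (x > threshold) == (y == 1))
        if correct > best_correct:
            best_threshold, best_correct = threshold, correct
    return best_threshold


def fit_bagged_stumps(samples, n_trees=25, seed=0):
    """Fit one stump per bootstrap sample (drawn with replacement)."""
    rng = random.Random(seed)
    thresholds = []
    for _ in range(n_trees):
        bootstrap = [rng.choice(samples) for _ in samples]
        thresholds.append(fit_stump(bootstrap))
    return thresholds


def predict(thresholds, x):
    """Majority vote over the stumps: class 1 if most say x > threshold."""
    votes = sum(1 for t in thresholds if x > t)
    return 1 if 2 * votes > len(thresholds) else 0


# Toy one-dimensional data: (feature value, class label).
samples = [(0.5, 0), (1.0, 0), (1.5, 0), (2.0, 0),
           (3.0, 1), (3.5, 1), (4.0, 1), (4.5, 1)]
thresholds = fit_bagged_stumps(samples)
```

Because each stump sees a different resample of the data, the learned thresholds vary from tree to tree, and the vote averages out that variation.
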

From the abstract of "When are Deep Networks really better than Decision Forests at small sample sizes, and how?" by Haoyin Xu and 11 other authors: Deep networks and decision forests (such as random forests and gradient boosted trees) are the leading machine learning methods for structured and tabular data, respectively. Many papers have empirically compared large numbers of classifiers on one or two different domains (e.g., on 100 different tabular data settings). However, a careful conceptual and empirical comparison of these two strategies using the most contemporary best practices has yet to be performed.

Furthermore, because of the tree structure of the neural network, the number of parameters to be learned is quite modest compared to the number of nodes.
